AI Growth Systems for Founders: A Practical Guide to Build, Scale, and Measure

A practical guide to AI growth systems for founders, with a roadmap, stack audit, ROI model, ad tactics for Meta and TikTok, and governance best practices.

Mar 10, 2026

Founders are under pressure to grow faster while keeping costs under control and brand trust intact. AI can multiply output, but only when it is built into a coherent growth system. This guide shows how to design AI growth systems for founders with technical patterns, a 90-day roadmap, unit economics, ad strategies for Meta and TikTok, and governance rules you can apply right away.

The dilemma: speed vs control

Every founder faces the same tension. You can spin up automations fast and push to market, or you can move deliberately and risk losing first-mover advantage. The sweet spot is predictable speed with clear guardrails.

Key questions to answer before you scale with AI:

Where should AI act autonomously, and where should it assist a human?

How will you measure model performance and business impact?

Who owns the data, the model, and the business risk?

Treat AI as a system - not a single tool. A reliable system defines boundaries, ownership, and metrics from day one. That approach reduces brand risk and avoids fragile automations that break when scale or data shifts.

What "AI growth systems for founders" actually means

When I say AI growth systems for founders, I mean a modular, measurable pipeline that converts inputs into repeatable growth outcomes. Inputs include first-party data, creative assets, and business rules. Outputs include leads, conversions, retention improvements, and lower CAC.

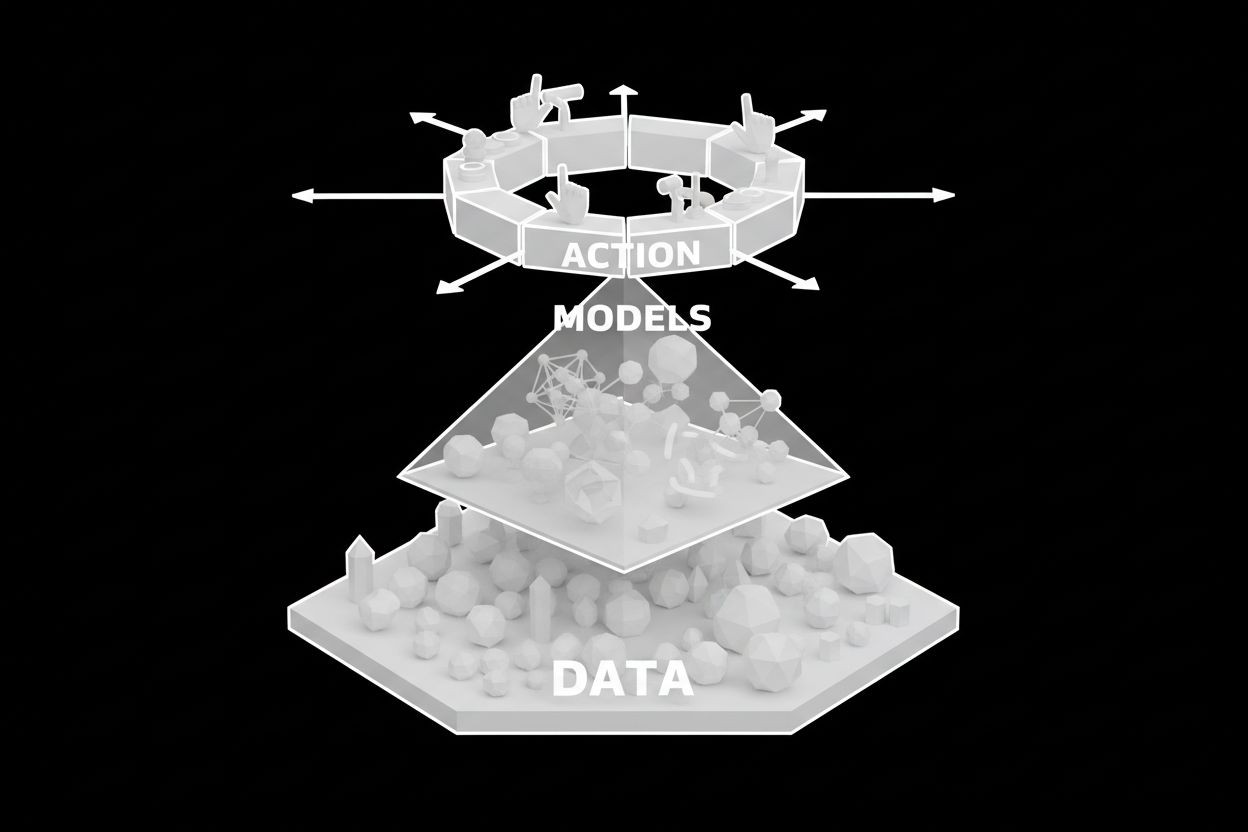

A true growth system connects these layers:

Data and instrumentation - clean events and user profiles

Models - from embeddings to LLMs to custom classifiers

Action layer - ads, chat agents, emails, and content pipelines

Measurement - causal tests and cohort analysis

Governance - safety checks, rollout policies, and human review

This framework keeps you focused on outcomes instead of chasing shiny tools.

The AI growth stack audit - what to check, what to build

Run an AI growth stack audit before you invest. The audit is a checklist founders can use to evaluate readiness and prioritize work.

Audit steps and remediation actions:

Data hygiene

Check: events tracked, identity stitching, clean user profiles

Fix: add server side events, backfill missing keys, use a single user ID

Model selection criteria

Check: cost per token, latency, fine-tuning options, safety filters

Fix: benchmark GPT-4o or a similar model for high-quality responses, and test Claude and open-source models against it on cost per token and latency

Vector store and retrieval

Check: embeddings for knowledge retrieval, freshness strategy

Fix: choose a vector DB like Pinecone or Milvus and set TTL for stale docs

Action integrations

Check: can models call APIs securely, is logging enabled

Fix: add a middleware layer that records model decisions and supports human override

Monitoring and rollback

Check: alerting for increased fallback rates or user complaints

Fix: create phased rollouts and automated rollback triggers
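The phased rollout and rollback fix can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the function and class names, the 200-event window, the 15 percent threshold, and the 50-sample minimum are all assumptions chosen for the example.

```python
import hashlib
from collections import deque


def in_rollout(user_id: str, percent: int) -> bool:
    """Deterministically place a user in one of 100 buckets and admit the first `percent`."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100 < percent


class RollbackTrigger:
    """Track recent outcomes and signal rollback when the fallback rate gets too high."""

    def __init__(self, window: int = 200, max_fallback_rate: float = 0.15, min_samples: int = 50):
        # Hypothetical defaults; tune them against your own baseline.
        self.outcomes = deque(maxlen=window)
        self.max_fallback_rate = max_fallback_rate
        self.min_samples = min_samples

    def record(self, fell_back: bool) -> None:
        self.outcomes.append(fell_back)

    def fallback_rate(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def should_rollback(self) -> bool:
        # Require a minimum sample so a few early fallbacks do not trip the trigger.
        enough_data = len(self.outcomes) >= self.min_samples
        return enough_data and self.fallback_rate() > self.max_fallback_rate
```

Hashing the user ID keeps each user in the same bucket as the rollout percentage grows, so raising it from 5 to 25 only adds users and never flips anyone back to the old experience.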

Minimal working stack for founders wanting quick wins:

Instrumentation: server events to a CDP, user ID sync

Storage: cloud DB plus vector store for embeddings

Models: hosted LLMs for prototyping, self-hosted for cost at scale

Orchestration: small service to route prompts, call APIs, and log outputs

Action: ad platform APIs, chat agent channels, email provider

Example integration pattern (pseudocode for prompt enrichment and retrieval):
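A minimal Python sketch of that pattern follows. It assumes a toy keyword retriever in place of a real vector store and a `generate` callable standing in for whichever hosted or self-hosted model you use. Every name here (`Doc`, `retrieve`, `build_prompt`, `answer`) is illustrative and not taken from any specific library.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Doc:
    doc_id: str
    text: str


def retrieve(query: str, docs: List[Doc], top_k: int = 2) -> List[Doc]:
    """Toy retriever: rank docs by the number of lowercase words shared with the query.

    A production system would query a vector store here instead.
    """
    q_words = set(query.lower().split())
    scored = [(len(q_words & set(d.text.lower().split())), d) for d in docs]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [d for score, d in ranked if score > 0][:top_k]


def build_prompt(query: str, context: List[Doc]) -> str:
    """Enrich the user query with the retrieved context."""
    context_block = "\n".join(f"[{d.doc_id}] {d.text}" for d in context)
    return f"Answer using only this context:\n{context_block}\n\nQuestion: {query}"


def answer(query: str, docs: List[Doc], generate: Callable[[str], str], log: List[Dict]) -> str:
    """Retrieve, enrich, generate, and log the decision for later review."""
    context = retrieve(query, docs)
    prompt = build_prompt(query, context)
    output = generate(prompt)  # any model client can be passed in here
    log.append({"query": query, "doc_ids": [d.doc_id for d in context], "output": output})
    return output
```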

This pattern keeps retrieval and generation separate so you can swap models without rearchitecting the system.

90-day AI implementation roadmap

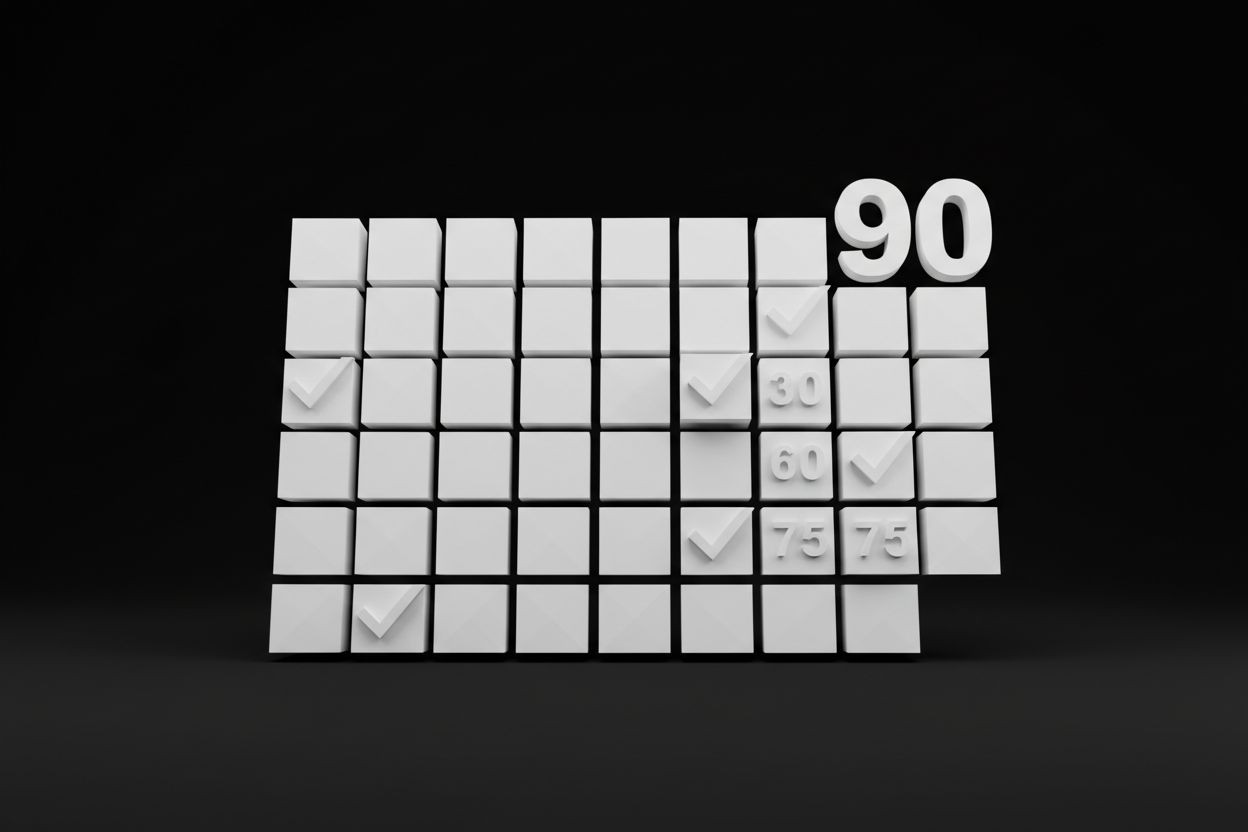

A focused 90-day plan converts the audit into business results. Phase by phase:

Phase 1 - Weeks 1 to 3: Discovery and instrumentation

Map growth funnel and identify 2 highest impact use cases (lead capture, ad creative, chat triage)

Complete event tracking and identity stitching

Run baseline metrics for CAC, conversion rate, average order value, LTV

Phase 2 - Weeks 4 to 7: Prototype and measure

Build a prototype for each use case with hosted LLMs

Run A/B tests with control groups to measure lift

Implement logging, fallback, and human review flows

Phase 3 - Weeks 8 to 12: Harden and scale

Migrate production traffic via phased rollout

Optimize prompts and batch inference for cost savings

Integrate models with ad pipelines for creative generation and targeting

Deliverables at day 90:

Two validated AI automations with measured lift

Cost and latency profile for each automation

Playbook for scaling and governance checklist

This plan is designed for fast learning with low risk. If a prototype underperforms, iterate or reallocate budget to the outperforming use case.
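To decide whether a prototype is underperforming, you need a consistent way to read the A/B results. The sketch below assumes a simple two-group conversion test and uses a pooled two-proportion z-test, which is one common choice and not the only valid one. The function name and its inputs are illustrative.

```python
import math
from typing import Tuple


def conversion_lift(control_conv: int, control_n: int,
                    treat_conv: int, treat_n: int) -> Tuple[float, float, float]:
    """Return (relative lift, z statistic, two-sided p-value) for treatment vs control."""
    p_c = control_conv / control_n
    p_t = treat_conv / treat_n
    relative_lift = (p_t - p_c) / p_c

    # Pooled conversion rate under the null hypothesis of no difference.
    pooled = (control_conv + treat_conv) / (control_n + treat_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treat_n))
    z = (p_t - p_c) / se

    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return relative_lift, z, p_value


lift, z, p = conversion_lift(control_conv=500, control_n=10_000,
                             treat_conv=560, treat_n=10_000)
print(f"lift={lift:.1%} z={z:.2f} p={p:.3f}")
```

Agree on the significance threshold and minimum sample size before the test starts, so the decision to iterate or reallocate budget is not made on noise.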

Unit economics and ROI for AI investments

Founders need clear rules for how much to invest and how to measure payback.

High level guidelines:

Start with 1-3 percent of projected marketing spend for AI experiments

If an AI automation improves conversion rate by 10 percent and CAC drops by 8 percent, expand budget to 5-10 percent of the channel spend

Account for recurring costs: inference, vector store, storage, monitoring

Simple ROI example:

Monthly ad spend: $50,000

Baseline CAC: $50

New conversion uplift: 10 percent

New CAC: about $45.45 ($50,000 / 1,100 customers)

Monthly new customers: 1,000 baseline ($50,000 / $50); with uplift you get 1,100

Incremental customers: 100

Monthly incremental revenue at $200 ARPU: 100 x $200 = $20,000

Monthly AI cost: $2,500

Net monthly gain: $17,500
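The same arithmetic is easy to wrap in a small function so you can plug in your own numbers. This sketch assumes ad spend stays constant and that the uplift applies to the whole channel. It derives customer counts and CAC from the uplift instead of taking them as separate inputs, which keeps the figures consistent with each other. The function name and field names are illustrative.

```python
from typing import Dict


def monthly_roi(ad_spend: float, baseline_cac: float, conversion_uplift: float,
                arpu: float, ai_cost: float) -> Dict[str, float]:
    """Estimate the monthly gain from a conversion uplift at constant ad spend."""
    baseline_customers = ad_spend / baseline_cac
    new_customers = baseline_customers * (1 + conversion_uplift)
    new_cac = ad_spend / new_customers
    incremental_customers = new_customers - baseline_customers
    incremental_revenue = incremental_customers * arpu
    return {
        "baseline_customers": baseline_customers,
        "new_customers": new_customers,
        "new_cac": new_cac,
        "incremental_customers": incremental_customers,
        "incremental_revenue": incremental_revenue,
        "net_gain": incremental_revenue - ai_cost,  # revenue gain minus recurring AI cost
    }


result = monthly_roi(ad_spend=50_000, baseline_cac=50, conversion_uplift=0.10,
                     arpu=200, ai_cost=2_500)
for name, value in result.items():
    print(f"{name}: {value:,.2f}")
```

Note that this treats ARPU as revenue, not margin. If your gross margin is low, multiply the incremental revenue by margin before comparing it with the AI cost.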

Track payback period and marginal CAC improvement. Use these calculations to decide whether to self-host models for cost control or stay with hosted APIs for development speed.

Measurement and KPIs beyond vanity metrics

Vanity metrics are easy to report but they do not prove impact. Use these action-oriented KPIs:

Leading indicators

Query to resolution ratio for chat agents

Creative quality score based on CTR and watch time for TikTok

Rate of human escalation or override

Model confidence distribution and fallback rate

Business metrics

Incremental conversion rate and CAC by cohort

LTV uplift attributable to AI-driven personalization

Retention delta for users exposed to AI workflows

Operational metrics

Cost per 1,000 tokens or inference

Model latency P95 and P99

Error rate and automated rollback frequency

Design dashboards that tie model health to business outcomes. For example, if fallback rate rises, flag a potential drop in conversion.
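As a rough sketch of what sits behind such a dashboard, the snippet below computes P95 and P99 latency plus fallback rate from raw request logs and raises flags a growth owner can act on. The latency budget, the fallback threshold, and the flag names are assumptions for illustration, and the percentile uses the simple nearest-rank method.

```python
import math
from typing import Dict, List


def percentile(values: List[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct percent of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def model_health(latencies_ms: List[float], fallbacks: List[bool],
                 p95_budget_ms: float = 1500.0, max_fallback_rate: float = 0.10) -> Dict:
    """Summarize operational metrics and flag conditions that may hurt conversion."""
    p95 = percentile(latencies_ms, 95)
    p99 = percentile(latencies_ms, 99)
    fallback_rate = sum(fallbacks) / len(fallbacks)

    flags = []
    if p95 > p95_budget_ms:
        flags.append("latency_p95_over_budget")
    if fallback_rate > max_fallback_rate:
        # A rising fallback rate is the cue to check conversion for the same cohort.
        flags.append("fallback_rate_high_check_conversion")

    return {"p95_ms": p95, "p99_ms": p99, "fallback_rate": fallback_rate, "flags": flags}
```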

Team and talent strategy

AI growth systems require a mix of strategic and execution talent. Roles to prioritize:

Product owner with growth metrics accountability

ML engineer or MLOps lead for model integration

Full stack engineer for orchestration and APIs

Data engineer for pipelines and instrumentation

Growth marketer to design experiments and read signals

Hiring guidance

Hire for outcome orientation rather than pure research credentials

Keep initial work cross functional and small so learning is concentrated

Consider agency partnerships for ad creative and execution while you build in-house capabilities

Decide early whether to own models or outsource. Owning models can reduce variable costs and provide control. Outsourcing speeds time to market but may limit customization.

Running AI-driven ads on Meta and TikTok

AI can speed creative production, audience generation, and reporting. Use it carefully to preserve brand voice and comply with ad policies.

Tactical steps

Use AI to generate 10-20 creative variants and run creative testing with conversion campaigns

Generate audience clusters from first party data, then expand using lookalike approaches

Automate copy and assets for TikTok and Meta, but include a human review step before launch

Integrate ad performance back into the model training loop so creative improves over time

Practical tips

For TikTok favor short hooks and rapid cuts. Use AI to produce multiple 6 to 15 second scripts

For Meta use AI to create primary text variations and headline tests

Monitor policy compliance especially for claims, regulated categories, and user data usage

If you need a managed route for ad execution, consider a partner experienced in paid ads management to accelerate testing while you build internal automation. See Paid Ads Management - The Social Search for a managed option.

AI chat agents and social media at scale

Chat agents and social automation are high impact for lead gen and retention. Key implementation notes:

Use AI chat agents for qualification, booking, and simple support. Route complex issues to humans

Connect chat agents to your CRM so leads are captured and scored automatically

Automate social posting pipelines but schedule human review for high sensitivity content
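The "route complex issues to humans" rule can start as a plain routing function in front of the agent. The sketch below assumes an upstream classifier that returns an intent label and a confidence score. The topic list, the 0.75 threshold, and the function name are hypothetical and should be set from your own escalation data.

```python
# Hypothetical intents that always go to a person, whatever the model's confidence.
SENSITIVE_INTENTS = {"billing", "refund", "legal", "cancellation"}


def route_message(intent: str, confidence: float, min_confidence: float = 0.75) -> str:
    """Return "human" or "agent" for an incoming chat message."""
    if intent in SENSITIVE_INTENTS:
        return "human"  # risk is too high for an unreviewed answer
    if confidence < min_confidence:
        return "human"  # the classifier is unsure what the user wants
    return "agent"      # qualification, booking, and simple support
```

Log every routing decision alongside the transcript so the rate of human escalation becomes a metric you can track over time.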

Useful integrations

Sync chat transcripts to CRM for lead scoring and follow up

Use automated social media workflows to repurpose content across channels and formats

Capture leads from chat and trigger automated lead nurturing sequences

For hands-on solutions, see Automated AI Chat Agents - The Social Search and Automated Social Media - The Social Search.

Failure case studies and what to learn from them

Failure is the fastest way to learn when you capture the reasons. Here are three short post-mortems founders can use as guardrails:

Over-automation of customer responses

What went wrong: chat agent provided incorrect billing info causing refunds and brand damage

Fix: add a verification step for billing answers and require human sign off for transactions

Blind creative scaling

What went wrong: a campaign used AI-generated claims that violated platform policies and was paused

Fix: include policy validation in the creative pipeline and have a compliance reviewer

Data drift breaks personalization

What went wrong: embeddings grew stale and personalized recommendations dropped conversion

Fix: schedule re-embedding and refresh pipelines with alerts for signal degradation
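For the data drift case, a scheduled job can find documents whose embeddings have aged past a limit and raise an alert when too much of the index is stale. This is a sketch under stated assumptions: a mapping of document ID to last-embedded timestamp, a 30-day age limit, and a 50 percent alert threshold, all of which are illustrative.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def stale_doc_ids(last_embedded: Dict[str, datetime], now: datetime,
                  max_age_days: int = 30) -> List[str]:
    """Return IDs of documents whose embeddings are older than max_age_days."""
    cutoff = now - timedelta(days=max_age_days)
    return sorted(doc_id for doc_id, ts in last_embedded.items() if ts < cutoff)


def should_alert(last_embedded: Dict[str, datetime], now: datetime,
                 max_age_days: int = 30, max_stale_fraction: float = 0.5) -> bool:
    """Alert when more than max_stale_fraction of the index is stale."""
    if not last_embedded:
        return False
    stale = stale_doc_ids(last_embedded, now, max_age_days)
    return len(stale) / len(last_embedded) > max_stale_fraction


now = datetime(2026, 3, 10, tzinfo=timezone.utc)
index = {
    "faq": datetime(2026, 3, 1, tzinfo=timezone.utc),
    "pricing": datetime(2026, 1, 5, tzinfo=timezone.utc),
    "returns": datetime(2025, 12, 20, tzinfo=timezone.utc),
}
print(stale_doc_ids(index, now), should_alert(index, now))
```

Age is only a proxy for drift. Pair it with a conversion or click-through signal on the recommendations themselves, since fresh embeddings can still degrade if user behavior shifts.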

Document each failure, the detection signal, and the rollback or patch. Keep a short playbook of recovery steps.

Governance, privacy, and compliance

AI systems depend on data. Protecting that data preserves your customer trust and reduces legal risk.

Practical governance steps

Define data retention and deletion policies and enforce them programmatically

Segment production keys, and never send sensitive PII to third-party model APIs or write it to their logs

Use server side call patterns and tokenization to minimize exposure

Maintain an approvals log for any model update or prompt change
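One way to apply the PII rule in code is to redact text on your server before it reaches a third-party model. The sketch below uses two simple regular expressions for emails and phone numbers. It is an assumption-laden starting point and not a complete PII detector: names, addresses, and unusual formats will slip through, so treat it as one layer among several.

```python
import re

# Simple patterns for illustration only; real PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace emails and phone-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


print(redact_pii("Contact jane@example.com or +1 (415) 555-0100 today"))
```

Run the redaction inside the server-side middleware that makes the model call, so no client or prompt template can bypass it.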

Make sure legal reviews any use of third party data for targeting. For regulated verticals you may need to add additional controls or avoid certain automated behaviors.

Building a defensible moat with AI

When everyone uses the same models you need other layers to maintain advantage. Foundational moats include:

Proprietary data: customer interactions, product telemetry, and conversion history

Embedding libraries that capture domain knowledge unique to your business

Prompt and evaluation repositories that codify what works for your brand

Tight integrations into product UX that competitors cannot easily replicate

Invest a small fraction of your AI budget into tooling that turns data into reusable assets. Over time these assets drive sustained advantage.

Final rules of thumb and next steps

Three pragmatic rules for founders building AI growth systems:

Start with the highest impact funnel stage and instrument thoroughly

Measure causally and expand budget only after repeatable lift is proven

Build guardrails early so speed does not become a liability

If you want tactical help implementing these systems, you can explore managed and technical options. For automated lead capture and nurture see Automated Lead Generation - The Social Search. To optimize organic reach and discoverability with AI workflows consider Automated SEO - The Social Search. When you are ready to talk specifics you can contact our team to scope a 90-day engagement.

Designing AI growth systems for founders is both a technical and strategic exercise. Treat the work as building a product with experiments, metrics, and clear ownership. Do that and AI becomes a predictable engine for acquisition, conversion, and retention.